Introduction

Artificial Intelligence (AI) has rapidly evolved from a promising concept into a transformative force that impacts almost every facet of our lives. From healthcare and finance to transportation and entertainment, AI technologies are reshaping industries and enhancing efficiency. However, with this great power comes an even greater responsibility. The ethical and societal implications of AI are profound, demanding that we embrace the principles of responsible AI to ensure that AI continues to benefit humanity rather than harm it.

Understanding Responsible AI

Responsible AI is a multifaceted concept that encompasses the ethical, legal, and social dimensions of AI development and deployment. It centers around the idea that AI systems should be designed, developed, and used in ways that align with human values and adhere to fundamental principles like fairness, transparency, accountability, and privacy. Let’s delve deeper into these key components of responsible AI.

- Fairness:

Ensuring that AI systems do not exhibit bias or discriminate against individuals or groups based on race, gender, age, or other protected characteristics is paramount. Developers must work diligently to identify and rectify biases in training data and algorithms to create equitable AI solutions.

- Transparency:

Transparency is essential for building trust in AI systems. Developers should provide clear and understandable explanations of how AI systems make decisions, allowing users and stakeholders to comprehend the reasoning behind AI-driven outcomes.

- Accountability:

Accountability involves assigning responsibility for the actions and consequences of AI systems. Developers, organizations, and governments must establish clear lines of responsibility and accountability to address issues that may arise from AI deployments.

- Privacy:

Protecting user data and privacy is a fundamental ethical principle. Responsible AI systems should adhere to strict data protection standards and minimize the risk of misuse or unauthorized access to personal information.

Best Practices

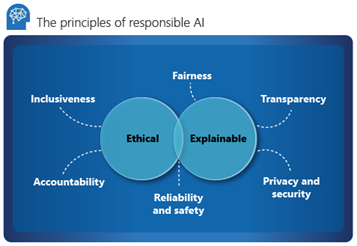

Large companies such as Microsoft offer training on best practices for Responsible AI. This Learn article, https://learn.microsoft.com/en-us/azure/cloud-adoption-framework/innovate/best-practices/trusted-ai, makes essentially the same points in a slightly different way, grouping six key principles under two areas: Ethical and Explainable.

Microsoft has also published a framework for building AI systems responsibly: https://blogs.microsoft.com/on-the-issues/2022/06/21/microsofts-framework-for-building-ai-systems-responsibly/. In it, they state:

“The need for this type of practical guidance is growing. AI is becoming more and more a part of our lives, and yet, our laws are lagging behind. They have not caught up with AI’s unique risks or society’s needs. While we see signs that government action on AI is expanding, we also recognize our responsibility to act. We believe that we need to work towards ensuring AI systems are responsible by design.”

Why Responsible AI Matters

- Mitigating Bias and Discrimination:

AI systems can inadvertently perpetuate societal biases present in training data. Responsible AI aims to minimize these biases and ensure that AI-driven decisions are fair and impartial.

Microsoft’s framework describes how the company has worked to improve fairness in speech-to-text technology: it expanded its data collection efforts and engaged sociolinguistic experts to reduce the technology’s error rates for diverse populations.

- Building Trust:

Trust is essential for the widespread adoption of AI technologies. When individuals can trust AI systems to make unbiased, transparent, and accountable decisions, they are more likely to embrace these technologies.

Under the heading of fit for purpose, Microsoft’s framework explains that, in order to remain trustworthy, the company chose to retire Azure Face capabilities that inferred emotional states and identity attributes such as gender, age, smile, facial hair, hair, and makeup.

- Ethical Considerations:

AI systems increasingly make decisions that affect individuals’ lives, such as loan approvals and medical diagnoses. Responsible AI ensures that these decisions are made ethically and do not harm vulnerable populations.

Microsoft’s framework puts appropriate use controls in place for Custom Neural Voice and facial recognition: a Sensitive Uses review is performed so that only acceptable use cases are given access to the services that use these technologies.

- Legal Compliance:

As AI becomes more prevalent, legal frameworks are emerging to govern its use. Organizations that adhere to responsible AI practices are more likely to comply with existing and future regulations.

Implementing Responsible AI

- Ethical AI Development:

Organizations should adopt ethical AI development practices, emphasizing fairness, transparency, and accountability from the initial design stages. Ethical considerations should be integrated into every step of the AI development process.

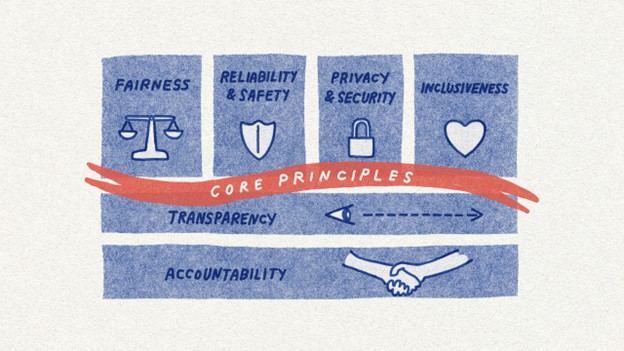

Microsoft has made such Responsible AI Standards part of their Core Principles. Although they view their efforts so far as a significant step, it is just one step. As they make progress with implementation, they expect to encounter challenges that require adjustments to be made. Thus Microsoft’s standard will remain a living document, evolving as needed.

- Continuous Monitoring:

AI systems should be continuously monitored and evaluated for bias, discrimination, and unintended consequences. Regular audits and assessments help identify and rectify issues as they arise.

- Collaboration and Education:

The AI community, including developers, researchers, policymakers, and users, must collaborate to develop and promote responsible AI practices. Education and awareness initiatives are crucial for spreading knowledge about responsible AI.

Barnes Business Solutions promotes responsible AI for its audience through blogs and articles on this topic. We also promise to keep monitoring the subject and to provide you with periodic updates. Of course, you should also monitor how you use AI in your business and make sure you are doing so responsibly. If you have concerns, we are happy to discuss them with you!

- Regulation and Standards:

Governments and industry organizations should develop and enforce regulations and standards for responsible AI. These regulations should balance innovation with ethical considerations, fostering responsible AI adoption.
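To make the continuous monitoring point above more concrete, here is a minimal sketch of what a recurring bias audit could look like. It compares the rate of positive decisions across groups, one simple fairness measure among many. The function names, the sample data, and the 0.2 threshold are all illustrative assumptions; they do not come from Microsoft’s framework or from any particular library.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive decisions for each group.

    `records` is a list of (group, approved) pairs; this shape is an
    assumption made for the example.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def audit(records, max_gap=0.2):
    """Flag the batch when group selection rates differ by more than max_gap.

    The 0.2 default is an arbitrary illustrative threshold, not a standard.
    """
    rates = selection_rates(records)
    gap = max(rates.values()) - min(rates.values())
    return {"rates": rates, "gap": gap, "flagged": gap > max_gap}


# Hypothetical batch of loan decisions, labeled by group.
decisions = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

report = audit(decisions)
print(report)
```

Running a check like this on a schedule, and reviewing any flagged batch by hand, is one way to turn "regular audits" into a routine. A real audit would likely use several metrics and much larger samples.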

Conclusion

Responsible AI is not a choice but a necessity in our increasingly AI-driven world. Embracing the principles of fairness, transparency, accountability, and privacy is crucial to ensuring that AI continues to advance humanity’s interests and values. By working together to develop and implement responsible AI practices, we can navigate the future with confidence, harnessing the power of AI for the greater good while minimizing its potential risks.

Maria Barnes, President of Barnes Business Solutions, Inc., holds the “Azure AI Fundamentals” certification and is qualified to help you start your journey into AI. Contact mbarnes@BarnesBusinessSolutions.com or set up a FREE 30-minute consultation with Maria.